An Accountants views on Financial Systems and the Microsoft BI that goes along with them.

Thursday, 29 May 2008

Management Reporter - Scheduling Reports

She did not disappoint. They are in the final stages of a "solution" which they will make available to me in the next week. From what I can gather it allows you to schedule reports via the command line. This means you can schedule them using Task Scheduler or from SQL Server Agent. Either way the news is good.

As soon as I have it in my grubby little paws I will test and feedback.

- Paul Steynberg

Monday, 26 May 2008

The Rational Guide to PerformancePoint Server Planning - Not a Book Review

The book is written by Adrian Downes and Nick Barclay and assured to contain no bull by Microsoft's own Peter Bull, Principal Group Program Manager for PerformancePoint Server.

I would like to call this a book review but cannot as just reading the book would be like reading a murder mystery with the last chapters torn out. The authors casually point out that you can download bonus chapters from the website, but what they don't tell you is that this is where the gold is to be found. If you buy this book you MUST download the additional 4 chapters.

The book is well laid out and easy to read. There are highlighted notes, tech tips and cautionary announcements throughout and enough screen shots for illustration. Worth mentioning is Chapter 9, Integrating Business Data. This details the ETL process between LOB systems and PPS and from many posts on the forum this seems to be an area of concern for a lot of people.

Both authors are extremely active in the PPS community and this book shows their authority on the subject.

- Paul Steynberg

Thursday, 22 May 2008

Lies, Damned Lies and Statistics

So here I stand before you with the results of my survey. A resounding beating of the accountants by the techies by 17:2. (No Pareto Principle here.)

So do these statistics mean that I should abandon my accounting blog with a technical undertone? Absolutely not, because the statistics lie. I received a number of e-mails from the respondents who state that the accounting view is what makes this blog different from most other technical blogs. So, on that note I will continue as is, unabated by the statistics.

Thanks to all those who took the survey and took the time to contact me directly, really appreciate it. I leave you with this parting comic strip from Dilbert. What a classic!

- Paul Steynberg

Wednesday, 21 May 2008

Management Reporter Live Recording

https://www107.livemeeting.com/cc/msevents/view?id=BI27AL&role=attend&pw=EDK389

On my way to check it out. Also MS are about to give me a QFE for the performance issue. Will feedback on that as soon as I have results.

- Paul Steynberg

Monday, 19 May 2008

Shake the Trees Early

You may remember from my previous posts that we are moving from our current ERP system to Dynamics AX 2009 (Formerly 5.0 but re branded recently to fall in with Microsofts standards). As soon as we got the go ahead I put my hard hat on and ran into the tree, shoulder first.

So what do we mean but this. When one moves to a new ERP system, all the relevant data in the old one need to be migrated across. The very first place to start looking is subsidiary ledgers and control accounts. To illustrate this concept I am going to use Accounts Payable (AP) as my example. At any point in time all the open vouchers in the system should tie up to the AP ledger which in turn should balance to the General Ledger control account. It is also very important to start identifying how you can isolate the open/unpaid vouchers in your old system from an SQL script point of view. The outcome of this exercise will be that you should be able to clean up your data and have a full understanding of what needs to be extracted for the conversion.

I does not help to only balance up the subsidiary ledger. One should also look at the open/unpaid items and take a view on validity. As a yard stick I would suggest that any vouchers older than 6 months should be reviewed and taken care of prior to go live. In our situation I found a number of vouchers that were quite old but if you take the debit and credit memo's they balance to zero for a specific vendor. It thus came to light that zero payments were not being made on these nett-offs, resulting in an unusually high number of open items.

By making these numbers and transactions available to the accountants we have managed to clear a significant number of vouchers which will now not have to be taken on in the future.

A parting word of caution, always look up when shaking the tree, you need to side step the falling elephants that everybody can see but nobody wants to talk about.

- Paul Steynberg

Friday, 16 May 2008

Survey

Although I would love to promise the chance of winning a free holiday to Zimbabwe if you complete it, I am bound by a budget of, well nothing. So in return you will get my deepest thanks.

- Paul Steynberg

Thursday, 15 May 2008

Should You Read White Papers?

They are nothing more than thinly disguised glossy advertisements, commissioned or penned by the very people selling the product that comes blazing through the paper with more fanfare than the Oscars. They are disguised just enough to make the unsuspecting reader believe that it is an independent, unbiased and fair view of the products in question. This is like taking democratic advice from Robert Mugabe.

I have read a ton of white papers over the past year while trying to decipher fact from fiction in the ERP world. There are papers on how much better Oracle is than SAP, or SAP than Dynamics, or Dynamics than Lawson. The list is endless and if one believed them you would have to purchase them all or none at all.

So by all means go ahead and read them but question the source, independence and fairness. Here is a quote from http://www.freelancewritingsuccess.com/stelzner1.php

White papers help people make decisions and are actively sought after.

Consider that an astonishing 70% of information technology professionals rely on

white papers to make purchasing decisions in the U.S. (ITtoolbox, July 19,

2006).

That my friends, if true, is a disgrace to our profession.

- Paul SteynbergSunday, 11 May 2008

Leverage Your Existing Investment in Microsoft (Part I)

Under utilisation (Correctly spelled, see footnote 1) of one's current investment is probably one of the most common issues surrounding IT projects. This is especially true with Microsoft. Just as an example when I speak to other Managers about Analysis Services I am most often greeted with a look typically sported by the lesser spotted stunned mullet. It is quite unbelievable how many companies install an ERP system with SQL Server as the underlying database and have no idea that it is shipped with some great additional features such as Analysis Services and Reporting Services. To check what your version is shipped with go to: http://www.microsoft.com/sql/prodinfo/features/compare-features.mspx

Just as a quick FYI, Analysis Services is a framework that allows one to produce cubes for typically “slicing and dicing” ones data, often referred to as OLAP. Reporting Services is a tool that allows one to author and deliver reports from within a web environment. I am not going to go into much detail on these 2 gems (They will feature in a future blog), as the biggest culprit is the Office Environment, Excel being the ring leader.

Excel, Excel wherefore art though, a product that seems as old and familiar as Bill Shakespeare himself. Yip, I’m back here again, lurching on about Accountants and Excel, but you must understand, you just cannot separate them. So if this is true then why do so many Financial Systems not ship with Excel tools as standard. I had this happen to me some years back with our current system. The original decision makers who attended the demonstrations on the product were amazed by how easy the information could be pulled into Excel. However when it came time to actually implement this great functionality we were told that it costs “extra” and we were not licensed. Not just a little, but a lot extra.

Act II – In walks my stubborn alter ego, has a look at the product and says “We can write something like that for free”.

Evil Vendor – Ah, but our product is fully integrated with securities etc.

Stubborn Alter Ego – Big deal.

And so our Excel integration was born a few weeks later with a bit of help from our Database guru. We only worked on this from time to time when we needed a break from our daily routine and if we had to work out the actual man hours we would estimate that it was no more than 40 hours in total.

We now run 7 Excel based extracts from our Financial Systems, 1 Fixed Assets, 2 Accounts Payable and 4 General Ledger. We benchmarked them against the products supplied by our vendor and found that on average they ran 20 times faster. Not kidding, 20 times faster. How was this achieved? There are only 2 questions to be answered. Firstly is the person making the query allowed access to the data and secondly where is the data.

The first question can be answered by finding the security related tables within the database. One normally starts with finding the user table which holds the user id, name and other details related to the user. From here you then link to tables that associate the user with different entities and then profiles/menus/accounts etc etc. You need to understand how the security is set up in order to make the connections. A little bit of digging and you will work it out.

Once you understand the security model you then need to find out where the data is that is required. In my example of one of our Excel extracts we extract the balances of certain accounts chased down from a higher level account into several columns based on some criteria. Below is screenshot of the criteria that the users would enter in order to get the information. This particular extract will look to the account master table in order to return the account description and also the account balance table in order to retrieve the balances for the selected periods.

Most of the sheet is protected which gives the user limited input. Everything in grey cannot be changed, only the white blocks can be. Each of the input fields has a comment attached to it for on-line help and most of them are validated so that users cannot capture invalid information. In reality the users will complete the input fields and save the spreadsheet for repeated usage.

Once all the fields are updated the user then clicks the “Run Extract” button. This button has some Visual Basic code behind it which is also password protected. Once clicked the code traverses through the input fields and passes them as parameters to a Stored Procedure in SQL Server which in turn returns a data set and populates the data sheets.

Here is a step by step explanation of how this particular extract works.

The amount columns from 1 through 6 represent 6 different columns of data for each account that can be extracted and can change for each column based on the fiscal year, the scenario (Actual/Budget/Forecast) the currency (drop down validated to the currency table), Currency type (Also drop down and validated), from and to periods. I have not had anyone ask for more than the 6 comparative columns yet so one can assume that it is a safe number.

The accounts are then entered. If the account is a parent account you can specify the chase level which is just how many accounts down from this account do you want to display. The show levels switch if set to “N” will only show the accounts at the bottom of the chase and if set to “Y” will show the original account chased and all its subordinates down to the chase level. The New Sheet switch if set to “N” will return the data set to the DataDefault Sheet and if set to “Y” will create a new sheet for each account line’s data set that is fetched. Each sheet will be named with the account number.

There is setup information higher up for the Server Name, Database Name and version number of this particular extract. I have not shown this for obvious security reasons.

Now once the user hits the “Run Extract” button the VB Code collects the input data and builds a string that eventually gets sent to the server and database in the set up information as a stored procedure.

The stored procedure accepts the parameters and now goes through a set of validation procedures starting with the user access. When the user calls the stored procedure their domain and user name are available for the procedure to use in order to compare to the security settings within the product. If the user is allowed to see this org and the account then one allows the procedure to continue. If not we return an error message indicating lack of security. As in our case you may have to create a security link table that holds the Windows full domain name of the user logged in and then the user name of the user within the Financial System. If your system is not Windows Security compatible you may have a spare field in the user table to store the windows login.

Once the data sets are returned the VB script does a whole lot of quick steps such as:

Format the numbers columns correctly

Correct the column sizes to fit the data

Put in some totals

Some procedures done before the data is returned are:

Clear the data area to be populated

Remove and formatting including subtotals etc.

Delete any sheets that are not in the original sheet. (We have made provision for specially named sheets that are never deleted so the user can link them up for other purposes etc.)

Some of my other extracts are even more elaborate. For example the AP Voucher extract allows one to return the entire vendor master into Excel for an org, by double clicking the vendor line you return all vouchers from the system in another sheet. By double clicking a voucher one can return the lines that make up the entire voucher and even further down to the distribution etc. Our users just LOVE it, no need to navigate the application if you can just double click in an Excel sheet.

The point of this blog was merely to demonstrate that we must seek out ways to maximise our Microsoft investment and to challenge the hold that some vendors appear to have over us. In my next session on this I will highlight Analysis Services and how this product can make a full grown Accountant weak at the knees.

- Paul Steynberg

Footnote 1

An excerpt from a letter supposedly penned by John Cleese to the citizens of the Unites States of America: You should look up "revocation" in the Oxford English Dictionary. Then look up "aluminium". Check the pronunciation guide. You will be amazed at just how wrongly you have been pronouncing it. The letter 'U' will be reinstated in words such as 'favour' and 'neighbour', skipping the letter 'U' is nothing more than laziness on your part. Likewise, you will learn to spell 'doughnut' without skipping half the letters. You will end your love affair with the letter 'Z' (pronounced 'zed' not 'zee') and the suffix "ize" will be replaced by the suffix "ise". You will learn that the suffix 'burgh is pronounced 'burra' e.g. Edinburgh. You are welcome to respell Pittsburgh as 'Pittsberg' if you can't cope with correct pronunciation. Generally, you should raise your vocabulary to acceptable levels. Look up "vocabulary". Using the same twenty seven words interspersed with filler noises such as "like" and "you know" is an unacceptable and inefficient form of communication. Look up "interspersed". There will be no more 'bleeps' in the Jerry Springer show. If you're not old enough to cope with bad language then you shouldn't have chat shows. When you learn to develop your vocabulary then you won't have to use bad language as often.A rebuttal to this can be found at http://www.authorsden.com/categories/article_top.asp?catid=19&id=26498

Friday, 9 May 2008

PerformancePoint Server and the Art of Debits and Credits

Back to PPS, if you load your trial balance into the product with everything correctly signed, you will find that your trial balance when viewed from something like an Excel Pivot Table does not add up. So what has happened?

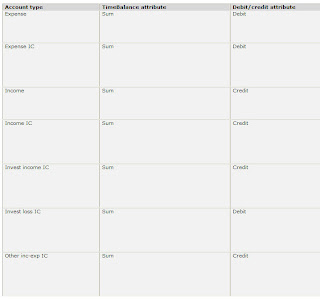

The answer is that the Account Dimension in the Planning Business Modeler has intelligence built into it. When you define an account you must also define the account type. Associated with the account type are time balance attributes as debit/credit attributes. In the screen shot below from the help file we can see what Microsoft attribute associations for income statement accounts.

So this tells us that for expenses the debit/credit attribute is debit and the incomes are credit. Just like the Prof at University said. As a result of this intelligence you must therefore be careful when loading your trial balance as you must switch the signs for all those accounts that you would normally show as negatives but have credit attributes in the account type.

The non-accountant likes to see sales and expenses and positive numbers because he subtracts one from the other. The account likes to see sales and a negative and expenses as a positive and adds them.

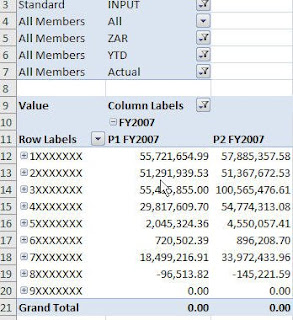

Below is an example of how the back ground intelligence works. The account series 1 are assets, 2 are liabilities, 3 sales, 4 cost of sales, 5 through 9 expenses. An account looking at this table would just add the whole lot up and inform you that it does not cast. The pivot table in Excel is reading from the cube directly and as you can see comes back to the holy grail of trial balances, ZERO.

Score? One for the man in the street and zero to the accountants.

- Paul Steynberg

The Flow Dimension for PerformancePoint Server Planning

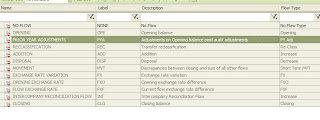

A number of people new to the product do not understand what this dimension is used for and initially it does not really seem to be relevant. It's relevance however becomes transparent when you try and report on your financials. The standard dimensions cannot be deleted and here is a list of them on the left from the Planning Business Modeler (PBM).

A number of people new to the product do not understand what this dimension is used for and initially it does not really seem to be relevant. It's relevance however becomes transparent when you try and report on your financials. The standard dimensions cannot be deleted and here is a list of them on the left from the Planning Business Modeler (PBM).To make it easier to understand just focus on 4 of the dimension members being NONE (None), OPE (Opening Balance), MVT (Movement) and CLO (Closing Balance). Now put them into perspective when looking at your trial balance. All income statement type accounts start each fiscal year with no value and accumulate until the end of the year. Each fiscal period has movement and one normally views them as monthly, year to date, quarterly etc etc. Balance sheet items on the other hand start every fiscal year with the closing balance from the prior fiscal year. They also have monthly movement and are normally viewed as at a point in time which in accounting terms is the closing balance. So to summarise, profit and loss (income statement) accounts have periodic movement and balance sheet accounts have an opening balance, periodic movement and a closing balance.

The next question you ask is the how do they tie up to the Flow Dimension and how does one load your current General Ledger (GL) information into PPS using them. Simple, load all income statement accounts with a Flow of NONE and load all balance sheet accounts with the closing balance (CLO) for each period. PPS then needs to calculate and populate the opening balances and periodic movement for the balance sheet accounts.

If you go to the business rules for the model and look under "System Defined Procedural Rules" you will find several rules that do just this. The first rule is called "Chart_CarryForward" and the second "Chart_GAP_Movement". These rules require parameters in order to run which are:

- Scenario

- Entity

- StartTimePeriod

- EndTimePeriod

- BusinessProcess

These calculations create records in the MG table for the model and when the cube is refreshed they appear for reporting purposes.

One may then question how do you arrive at YTD or CompareToLastYear totals for income statement accounts if you only have a Flow on NONE. This is calculated in the cube only under the definition rules called "TimeDataView Calculation". This rule will distinguish between balance sheet and income statement accounts and properly calculate what the YTD or comparative value are.

By using the combinations of the flow and the timedataview dimensions from within a tool such as Management Reporter one can create some complex reporting solutions.

- Paul Steynberg

What Happens if you Get Hit by a Truck?

I am going to go with mixed mode on this one. (The SQL guys will get that one.) The Financial Systems Manager is responsible for the backups but the Networks Team performs them. BUT who checks and tests them? In our environment we do. We regularly restore the Financial Databases to alternate locations and connect to them using our test installation. Why is this done? A very hard lesson was learned many years ago.

I had just started to work as a permanent employee for the company that I was contracted to. The Networks Department were quite proud of the backup strategy and assured us that they were backing up our database every evening. So we just continued with our day to day lives and never thought of it again. Then the unthinkable happened. During a re-index of certain tables the database was corrupted. (Ingres 1.2 not SQL). No problem we'll just restore to the previous nights backup and all will be well.

This is when the fabric of the know universe started to unravel. The backup operator had missed a warning to say that one of the files were locked and could not be backed up. Without going into the detail of how Ingres was being backed up, needless to say, we could not restore the database. So we started to go back in time through all the backups to find the last "good" one. It was 13 days prior! What ensued makes Stephen Hawkins and his space theories look like child's play. 48 hours later, sleep deprived and looking like Arthur Philip Dent after watching the destruction of earth, all was recovered and all systems were in sync.

We learned a lot from this hard lesson. First and foremost do not just take the word of your IT department that things are being backed up. Test them and test them regularly. The second item of concern that came to the fore was how much information about the systems was retained in my head. We had good documentation but more was needed. From this exercise we have developed one of the most comprehensive disaster recovery plans that I have seen.

I have lever arch files, stored in 2 locations, that contains amongst other things the following:

- Hardware setup

- Operating System version and setup

- Folder trees, permissions and shares

- Drive letters and space requirements

- User groups and users

- SQL Setup

- Printer setup with drivers (some systems are case sensitive, got caught on this one)

This file also has the disks stored in a sleeve with all the registry keys. The installation guide has screen shots and important notes at every step. It has been tested by a randomly picked person who had no prior knowledge of our system.

There are 2 types of IT people, those who have lost data, and those that will lose data. I cannot stress enough the importance of proper backup and recovery procedures.

So you may ask what does this have to do with the title of my blog? Because in 2000 while training for an ultra marathon early one morning I was hit by a truck. Luckily I survived but what if I had not?

- Paul Steynberg

Thursday, 8 May 2008

The Financial System Manager's Toolbox (Part I)

Excel - Without a doubt the corner stone of every accountants existence. Take this away and you risk imploding the known universe.

Word - Pretty obvious but essential for documenting. We make sure that every system is fully documented with backup and recovery plans. Hand in hand with documentation comes screen shots and this is where a tool such as Snag-It comes into play. A must have in order to make your documentation "idiot" proof. Once you have finished your document it is a good idea to save it as a pdf so that it becomes difficult for others to tamper with it. Microsoft has an add-in for the Office Suite which saves to pdf or xps. You can find it here.

Text Editor - I use EditPad. Especially useful when you receive and or send files between PC based technology and mainframes etc. It allows you to check record lengths, convert between Windows, UNIX and Mac, change cases and a whole lot more. Very useful tool.

SQL Editor - The current version of SQL Server Management Studio does not provide intellisense or auto complete. It also does not allow you to save your scripts in an orderly searchable fashion. I use Speed SQL IDE from Quest Software (which is no longer supported) as it provides all this functionality required and saves versions of your scripts in a central repository which can be backed up daily. They have replaced it with Toad, which I do not like. You can also use a tool called SQL Prompt from Red-gate. It works within SQL Server Management Studio and Visual Studio.

Backups - All our Financial System have been consolidated to our standard database, SQL Server. Sometime back I started to run out of disk space and also window time for backups. We found a product, also from Quest Software, called LiteSpeed. Now this is a product that saved my bacon. Our backups were taking 1,5 hours and size on disk of 80Gb's for our main ERP system. After installing LiteSpeed the backup times reduced to less than 30 minutes and the files size only 10Gb's. It compresses on the fly as it backs up. An absolute winner, saves you time and cost of additional disk space.

Text File Searching - A number of older style ERP systems produce files for every process within the business. These files are mainly text based and for example can be log files and spool files. Now in the past if things did not go according to plan these files would contain error messages or warnings. If the person in charge of the system was not aware of any problems they may go unchecked. These files were also produced during interfaces between systems and the only way to make sure that they were successful was to troll through the files and make sure that they were OK. A massive task if you have as many orgs as we do with as many interfaces running daily. I could not find anything to do this job automatically back in 2000 so speced and had my own application written by a Delphi programmer. It still works today and saves my guys hours. One sets up a search location, a file mask for the files being searched, what you are looking for or not looking for, a location for the file to be copied or moved to, an SMS number and message if you need to SMS someone etc. It was written as a service or to be interactive. Love it!

All the above tools combined assist myself and my team in maintaining our Financial Systems environment to a very high standard. Maybe one or two of them are just what you are looking for.

In Part II I will touch on more specific tools such as MS Integration Services, Analysis Services and Reporting Services.

- Paul Steynberg

Wednesday, 7 May 2008

PerformancePoint Server - Management Reporter

In short it is a tool for writing financial reports which looks like it has been designed by accountants for accountants. It comprises 3 elements, the designer, the viewer and the database that stores the report layouts and data. From within the designer one creates reports using a "building block" method. The rows, columns and reporting trees are defined and then pulled together in a report definition. This methodology allows a tremendous amount of re-usability and flexibility. All terribly easy to use. I had no Frx experience and read the help file which has a mini tutorial look and feel about it and within a few hours had reproduced our Income Statement with some complex calculations for PMO and GP etc etc.

One can publish the reports to MS Reporting Server, export it to Excel or just view them in the MR Viewer. Facilities exist to e-mail the report to users as well.

I have been using it for the past 6 months or so and one does need to be aware of certain issues surrounding the installation and usage thereof.

Installing

If you try and install the database remotely you will get an error. You must actually install the product on the server that houses the Management Reporter (MR) database. Only once you have done this can you install the client on your desktop. The documentation is not entirely clear on this.

Another "gotcha" is that you must have the PPS Excel Client (Planning Business Client) installed on your PC in order for MR to work.

PPS Hierarchies

If you thought that your PPS account and entity hierarchies were going to be respected you will be disappointed. The pop up selection box for accounts and hierarchies does not distinguish between those accounts that are at the leaf level or those that are parents and thus summary level accounts. If your accounts do not have a distinguishing feature within the account naming you will be lost.

PPS Member Properties

Something else I found a bit lacking was that none of the member properties that one creates for dimensions within PPS are exposed to MR for reporting purposes. This means that if you require them for your financial reporting make sure that they are full dimensions. I got caught on this with my entities. I created a member property called Country but could not use this in MR. I recreated the model and made the Country a full dimension. It is now available in MR for reporting on.

Performance

Performance is something that Microsoft are addressing as an issue from my side. MR has been re-written to pull from PPS and it uses MDX to bring back the data from the model cubes. For some reason the performance is not up to scratch. We are hoping for a solution shortly.

Report Generation

The current version only allows report generation one at a time. You cannot select multiple reports and run them simultaneously. You also cannot run them unattended on a schedule. Microsoft are not sure when this type of functionality will be included.

Multiple Reports on the Same Page

It is not possible to create a number of reports and have them co-exist on the same page. This is something that all Management Accountants will curse.

Conclusion

All in all this product looks neat and should just about cover most of the reports required by the Accountants.

I will teaching some Accountants how to use the product over the next few weeks and will report back on that exercise.

- Paul Steynberg

Why I started this Blog?

I have been through 2 implementations of ERP Systems and am embarking on my third which will be Dynamics AX 2009 (AX 5.0). I have worked on both sides of the fence, as client and consultant, and have managed my employers ERP Systems for the past 8 years.

During my time here I have also been fortunate enough to manage the Database Design and Development Team. This position brought about an incredible learning curve for me from both a technical and managerial aspect.

Many lessons have been learned and I hope to share them on this blog.

- Paul Steynberg